
10/11/2021

Docker For Mac Kafka

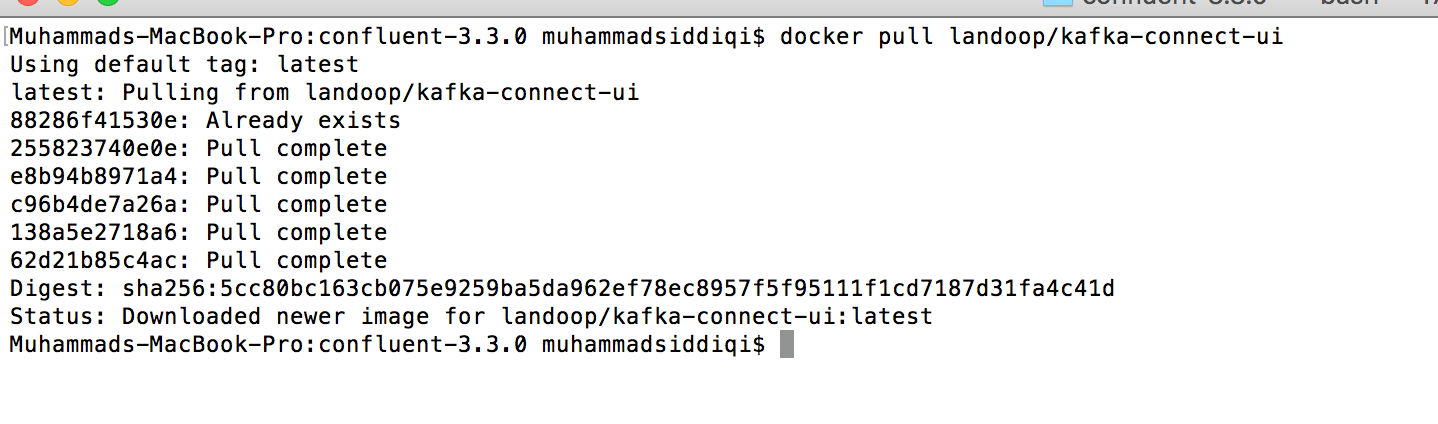

Running Kafka locally with Docker

There are two popular Docker images for Kafka that I have come across: bitnami/kafka and wurstmeister/kafka. I chose these instead of Confluent Platform because they're more vanilla compared to the components Confluent Platform includes. You can run both images locally using the docker-compose config, for example, on Mac OS X or Windows 10 running Docker.

Connecting to Kafka under Docker is the same as connecting to a normal Kafka cluster. If your cluster is accessible from the network, and the advertised hosts are set up correctly, a client will be able to connect to it.

Using a registry to decouple schemas from messages in an event streaming analytics architecture

Introduction

In the previous post, we consumed messages from and published messages to Kafka using both batch and streaming queries. In that post, we serialized and deserialized messages to and from JSON using schemas we defined as a StructType (pyspark.sql.types.StructType) in each PySpark script. Likewise, we constructed similar structs for CSV-format data files we read from and wrote to Amazon S3.
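As a rough illustration of the point about network accessibility and advertised hosts, the sketch below checks whether each broker address in a bootstrap string accepts a TCP connection from the machine where the client runs. The address `localhost:9092` and the function names are assumptions for illustration only; nothing here comes from a Kafka client library, and an open port does not by itself prove that the broker's advertised listeners are configured correctly.

```python
import socket
from typing import List, Tuple


def parse_bootstrap_servers(servers: str) -> List[Tuple[str, int]]:
    """Split a 'host1:port1,host2:port2' string into (host, port) pairs."""
    pairs = []
    for entry in servers.split(","):
        entry = entry.strip()
        if not entry:
            continue
        host, _, port = entry.rpartition(":")
        if not host or not port.isdigit():
            raise ValueError(f"expected host:port, got {entry!r}")
        pairs.append((host, int(port)))
    return pairs


def is_reachable(host: str, port: int, timeout: float = 2.0) -> bool:
    """Return True if a TCP connection to host:port can be opened."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        return False


if __name__ == "__main__":
    # Hypothetical address; substitute whatever host:port your compose file exposes.
    for host, port in parse_bootstrap_servers("localhost:9092"):
        status = "reachable" if is_reachable(host, port) else "NOT reachable"
        print(f"{host}:{port} is {status}")
```

If the port is reachable but a client still fails after the initial connection, the advertised host the broker returns is a likely place to look, since clients reconnect to that address rather than the one they first dialed.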

In the last post, Getting Started with Spark Structured Streaming and Kafka on AWS using Amazon MSK and Amazon EMR, we learned about Apache Spark, Apache Kafka, Amazon EMR, and Amazon MSK. In a previous post, Hydrating a Data Lake using Log-based Change Data Capture (CDC) with Debezium, Apicurio, and Kafka Connect on AWS, we explored Apache Avro and Apicurio Registry. In this post, we will store the Avro-format Kafka message's key and value schemas in Apicurio Registry and retrieve the schemas instead of hard-coding them in the PySpark scripts. We will also use the registry to store schemas for CSV-format data files.

Note the addition of the registry to the architecture for this post's demonstration

Technologies

Apache Spark

Apache Spark, according to the documentation, is a unified analytics engine for large-scale data processing. Spark provides high-level APIs in Java, Scala, Python (PySpark), and R. Spark provides an optimized engine that supports general execution graphs (aka directed acyclic graphs or DAGs).

Interest over time in Apache Spark and PySpark compared to Hive and Presto, according to Google Trends

Spark Structured Streaming

Spark Structured Streaming, according to the documentation, is a scalable and fault-tolerant stream processing engine built on the Spark SQL engine. You can express your streaming computation the same way you would express a batch computation on static data. The Spark SQL engine will run it incrementally and continuously and update the final result as streaming data continues to arrive. In short, Structured Streaming provides fast, scalable, fault-tolerant, end-to-end, exactly-once stream processing without the user having to reason about streaming.

Apache Avro

Apache Avro describes itself as a data serialization system.
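To make the Structured Streaming idea described above a little more concrete, here is a plain-Python model of it: the same aggregation is written once as a batch function and then applied incrementally to micro-batches, with the running result updated as each one arrives. This is not Spark code and does not use any Spark API; the `(region, amount)` record shape and all names are assumptions chosen only to illustrate the concept.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, Tuple

Sale = Tuple[str, float]  # (region, amount); hypothetical record shape


def total_by_region(sales: Iterable[Sale]) -> Dict[str, float]:
    """Batch-style computation: total sales amount per region."""
    totals: Dict[str, float] = defaultdict(float)
    for region, amount in sales:
        totals[region] += amount
    return dict(totals)


class IncrementalTotals:
    """Keeps a running result and folds in each new micro-batch."""

    def __init__(self) -> None:
        self.result: Dict[str, float] = {}

    def update(self, micro_batch: List[Sale]) -> Dict[str, float]:
        # Reuse the batch logic on the new data, then merge into the running result.
        for region, amount in total_by_region(micro_batch).items():
            self.result[region] = self.result.get(region, 0.0) + amount
        return dict(self.result)


if __name__ == "__main__":
    batches = [
        [("Asia", 10.0), ("Europe", 5.0)],
        [("Asia", 2.5)],
        [("Europe", 1.0), ("Africa", 4.0)],
    ]
    stream = IncrementalTotals()
    for batch in batches:
        print(stream.update(batch))
```

The design point is that the incremental result after the last micro-batch matches what the batch function would return over all the data at once, which is the property the documentation's description relies on.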

Interest over time in Apache Avro compared to Parquet and ORC, according to Google Trends

Apicurio Registry

We can decouple the data from its schema by using schema registries such as Confluent Schema Registry or Apicurio Registry. According to Apicurio, in a messaging and event streaming architecture, data published to topics and queues must often be serialized or validated using a schema (e.g., Apache Avro, JSON Schema, or Google Protocol Buffers). Of course, schemas can be packaged in each application. Still, it is often a better architectural pattern to register schemas in an external system and then reference them from each application.

Apache Kafka

According to the documentation, Apache Kafka is an open-source distributed event streaming platform used by thousands of companies for high-performance data pipelines, streaming analytics, data integration, and mission-critical applications.

Amazon MSK

Apache Kafka clusters are challenging to set up, scale, and manage in production. According to AWS documentation, Amazon MSK is a fully managed AWS service that makes it easy for you to build and run applications that use Apache Kafka to process streaming data. With Amazon MSK, you can use native Apache Kafka APIs to populate data lakes, stream changes to and from databases, and power machine learning and analytics applications.

Amazon EMR

According to AWS documentation, Amazon EMR (fka Amazon Elastic MapReduce) is a cloud-based big data platform for processing vast amounts of data using open source tools such as Apache Spark, Hadoop, Hive, HBase, Flink, Hudi, and Presto. Amazon EMR is a fully managed AWS service that makes it easy to set up, operate, and scale your big data environments by automating time-consuming tasks like provisioning capacity and tuning clusters.

Amazon EMR on EKS, a deployment option for Amazon EMR since December 2020, allows you to run Amazon EMR on Amazon Elastic Kubernetes Service (Amazon EKS).

Prerequisites

Similar to the previous post, this post will focus primarily on configuring and running Apache Spark jobs on Amazon EMR. The demonstration uses the following AWS resources:

- Amazon S3 bucket (holds all Spark/EMR resources)
- Amazon MSK cluster (using IAM Access Control)
- Amazon EKS container or an EC2 instance with the Kafka APIs installed and capable of connecting to Amazon MSK

Ensure the Amazon MSK Configuration has auto.create.topics.enable=true; this setting is false by default.

The architectural diagram below shows that the demonstration uses three separate VPCs within the same AWS account and AWS Region, us-east-1, for Amazon EMR, Amazon MSK, and Amazon EKS. The three VPCs are connected using VPC Peering. Ensure you expose the correct ingress ports and the corresponding CIDR ranges within your Amazon EMR, Amazon MSK, and Amazon EKS Security Groups. For additional security and cost savings, use a VPC endpoint for private communications between Amazon EMR and Amazon S3.

High-level architecture for this post's demonstration

Source Code

All source code for this post and the three previous posts in the Amazon MSK series, including the Python and PySpark scripts demonstrated herein, is open-sourced and located on GitHub.

Objective

We will run a Spark Structured Streaming PySpark job to consume a simulated event stream of real-time sales data from Apache Kafka. Next, we will continuously stream those aggregated results back to Kafka. Finally, a batch query will consume the aggregated results from Kafka and display the sales results in the console.

DataOps pipeline demonstrated in this post

Kafka messages will be written in Apache Avro format. The schemas for the Kafka message keys and values and the schemas for the CSV-format sales and sales regions data will all be stored in Apicurio Registry. Kafka message keys are not required, nor is it necessary to store both the key and the value in a common format of Avro in Kafka. This is only done for demonstration purposes and is not a requirement. Schema evolution, compatibility, and validation are important considerations but are out of scope for this post.

PySpark Scripts

PySpark, according to the documentation, is an interface for Apache Spark in Python. PySpark allows you to write Spark applications using the Python API. PySpark supports most of Spark's features such as Spark SQL, DataFrame, Streaming, MLlib (Machine Learning), and Spark Core. There are three PySpark scripts and one new helper Python script covered in this post:

- 11_incremental_sales_avro.py: PySpark script simulates an event stream of sales data being published to Kafka over 15–20 minutes
- 12_streaming_enrichment_avro.
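The idea of retrieving schemas from the registry instead of hard-coding them can be sketched roughly as follows. This is not the post's helper script. The URL path is an assumption based on the layout of the Apicurio Registry v2 REST API, and the registry address, the artifact ID `hypothetical-sales-value`, and the sample schema are all invented for illustration. The demo uses a stand-in opener so it runs without a live registry.

```python
import io
import json
import urllib.request
from typing import Callable
from urllib.parse import quote


def artifact_url(registry_url: str, artifact_id: str, group: str = "default") -> str:
    """Build the URL for the latest version of a registry artifact.

    ASSUMPTION: the path follows the Apicurio Registry v2 REST API layout.
    """
    base = registry_url.rstrip("/")
    return (
        f"{base}/apis/registry/v2/groups/{quote(group, safe='')}"
        f"/artifacts/{quote(artifact_id, safe='')}"
    )


def fetch_schema(url: str, opener: Callable = urllib.request.urlopen) -> dict:
    """GET the artifact and parse it as JSON (Avro schemas are JSON documents)."""
    with opener(url) as response:
        return json.loads(response.read().decode("utf-8"))


if __name__ == "__main__":
    # Stand-in opener so the sketch runs without a live registry.
    def fake_opener(url: str) -> io.BytesIO:
        body = {
            "type": "record",
            "name": "Sale",  # hypothetical record name
            "fields": [{"name": "amount", "type": "double"}],
        }
        return io.BytesIO(json.dumps(body).encode("utf-8"))

    url = artifact_url("http://localhost:8080", "hypothetical-sales-value")
    print(url)
    print(fetch_schema(url, opener=fake_opener))
```

Passing the opener as a parameter keeps the lookup logic testable offline; against a real registry, the default `urllib.request.urlopen` would be used, possibly with authentication that this sketch does not cover.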


Author: Victoria
